We’ve now spent a number of lectures exploring how to build effective models – we introduced the SLR and constant models, selected cost functions to suit our modeling task, and applied transformations to improve the linear fit.

Throughout all of this, we considered models of one feature ($\hat{y}_i = \theta_0 + \theta_1 x_i$) or zero features ($\hat{y}_i = \theta_0$). As data scientists, we usually have access to datasets containing many features. To make the best models we can, it will be beneficial to consider all of the variables available to us as inputs to a model, rather than just one. In today’s lecture, we’ll introduce multiple linear regression as a framework to incorporate multiple features into a model. We will also learn how to accelerate the modeling process – specifically, we’ll see how linear algebra offers us a powerful set of tools for understanding model performance.

OLS Problem Formulation

Multiple Linear Regression

Multiple linear regression is an extension of simple linear regression that adds additional features to the model. The multiple linear regression model takes the form:

$$\hat{y} = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \dots + \theta_p x_p$$

Our predicted value of $y$, $\hat{y}$, is a linear combination of the single observations (features), $x_i$, and the parameters, $\theta_i$.

We can explore this idea further by looking at a dataset containing aggregate per-player data from the 2018-19 NBA season, downloaded from Kaggle.

import pandas as pd

nba = pd.read_csv('data/nba18-19.csv', index_col=0)

nba.index.name = None # Drops name of index (players are ordered by rank)

nba.head(5)

Let’s say we are interested in predicting the number of points (PTS) an athlete will score in a basketball game this season.

Suppose we want to fit a linear model by using some characteristics, or features of a player. Specifically, we’ll focus on field goals, assists, and 3-point attempts.

FG, the average number of (2-point) field goals per game

AST, the average number of assists per game

3PA, the average number of 3-point field goals attempted per game

nba[['FG', 'AST', '3PA', 'PTS']].head()

Because we are now dealing with many parameter values, we’ve collected them all into a parameter vector $\theta$ with dimensions $(p+1) \times 1$ to keep things tidy. Remember that $p$ represents the number of features we have (in this case, 3).

$$\theta = \begin{bmatrix} \theta_0 \\ \theta_1 \\ \vdots \\ \theta_p \end{bmatrix}$$

We are working with two vectors here: a row vector representing the observed data, and a column vector containing the model parameters. The multiple linear regression model is equivalent to the dot (scalar) product of the observation vector and parameter vector.

$$\hat{y} = \begin{bmatrix} 1 & x_1 & x_2 & \dots & x_p \end{bmatrix} \begin{bmatrix} \theta_0 \\ \theta_1 \\ \theta_2 \\ \vdots \\ \theta_p \end{bmatrix} = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \dots + \theta_p x_p$$

Notice that we have inserted 1 as the first value in the observation vector. When the dot product is computed, this 1 will be multiplied with $\theta_0$ to give the intercept of the regression model. We call this 1 entry the intercept or bias term.

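Given that we have three features here, we can express this model as:

$$\hat{y} = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \theta_3 x_3$$

Our features are represented by $x_1$ (FG), $x_2$ (AST), and $x_3$ (3PA), with corresponding parameters $\theta_1$, $\theta_2$, and $\theta_3$.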

In statistics, the combination of this linear model with squared loss is called Ordinary Least Squares (OLS). The solution to OLS is the parameter vector $\hat{\theta}$ that minimizes the loss, also called the least squares estimate.
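To make the dot-product view concrete, here is a minimal sketch of a single prediction. The parameter values and the player's statistics are made up for illustration; they are not fitted to or taken from the NBA data.

```python
import numpy as np

# Hypothetical parameters [theta_0, theta_1, theta_2, theta_3] (not fitted values).
theta = np.array([0.5, 2.0, 0.1, 0.4])

# Hypothetical player: FG = 4.0, AST = 2.5, 3PA = 3.0.
# The leading 1 is the intercept (bias) entry of the observation vector.
x = np.array([1.0, 4.0, 2.5, 3.0])

# The prediction is the dot product of the observation and parameter vectors.
y_hat = x @ theta

# The same prediction, written out term by term.
y_hat_expanded = theta[0] + theta[1] * x[1] + theta[2] * x[2] + theta[3] * x[3]

print(y_hat, y_hat_expanded)
```

Both expressions compute the same number; the vector form simply hides the bookkeeping.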

Linear Algebra Approach

We now know how to generate a single prediction from multiple observed features. Data scientists usually work at scale – that is, they want to build models that can produce many predictions, all at once. The vector notation we introduced above gives us a hint on how we can expedite multiple linear regression. We want to use the tools of linear algebra.

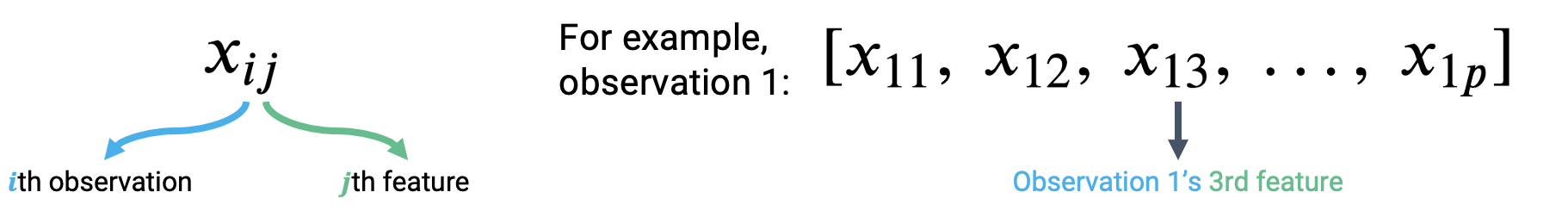

Let’s think about how we can apply what we did above. To accommodate for the fact that we’re considering several feature variables, we’ll adjust our notation slightly. Each observation can now be thought of as a row vector with an entry for each of $p$ features.

To make a prediction from the first observation in the data, we take the dot product of the parameter vector and first observation vector. To make a prediction from the second observation, we would repeat this process to find the dot product of the parameter vector and the second observation vector. If we wanted to find the model predictions for each observation in the dataset, we’d repeat this process for all observations in the data.

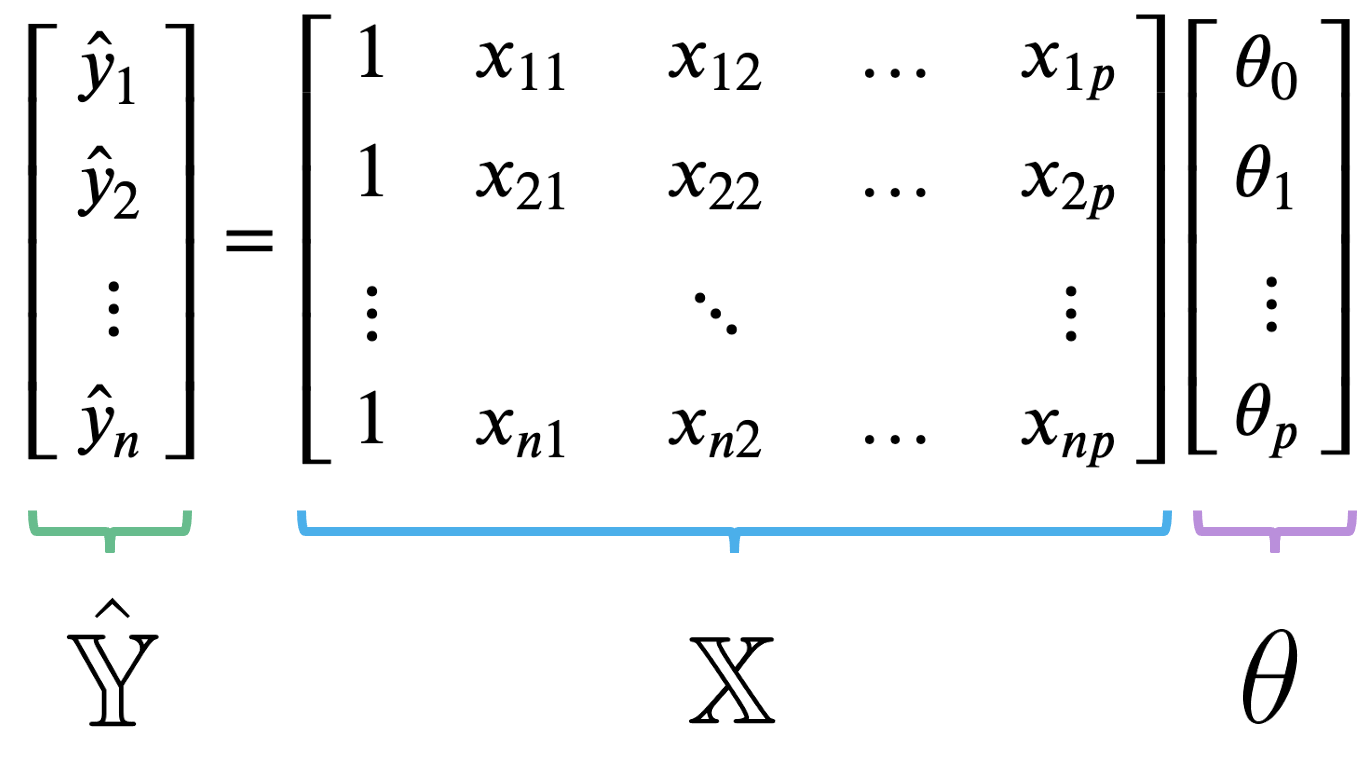

Our observed data is represented by $n$ row vectors, each with dimension $(p+1)$. We can collect them all into a single matrix, which we call $\mathbb{X}$.

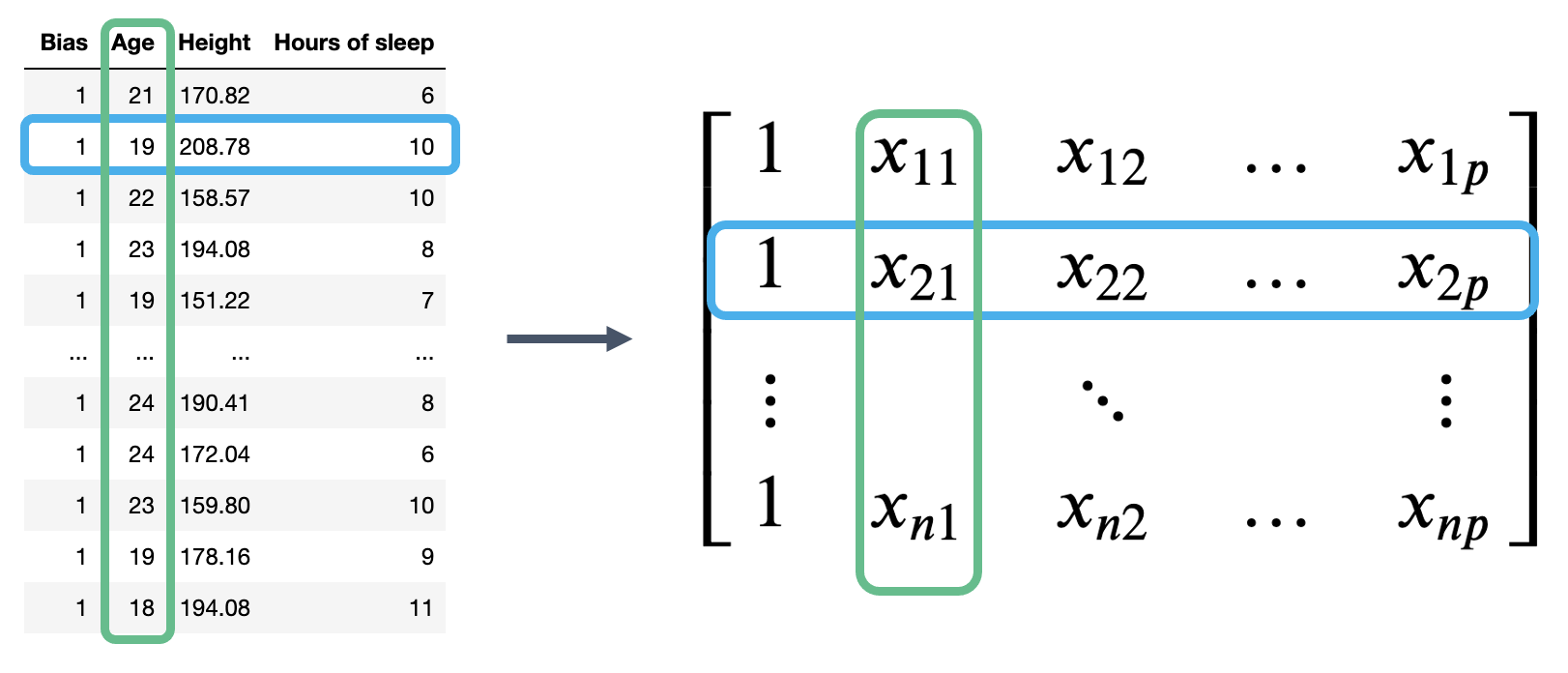

The matrix $\mathbb{X}$ is known as the design matrix. It contains all observed data for each of our $p$ features, where each row corresponds to one observation, and each column corresponds to a feature. It often (but not always) contains an additional column of all ones to represent the intercept or bias column.

To review what is happening in the design matrix: each row represents a single observation (for example, a student in Data 100), and each column represents a feature (for example, the ages of students in Data 100). This convention allows us to easily transfer our previous work in DataFrames over to this new linear algebra perspective.

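The multiple linear regression model can then be restated in terms of matrices:

$$\hat{\mathbb{Y}} = \mathbb{X} \theta$$

Here, $\hat{\mathbb{Y}}$ is the prediction vector with $n$ elements ($\hat{\mathbb{Y}} \in \mathbb{R}^{n}$); it contains the prediction made by the model for each of the $n$ input observations. $\mathbb{X}$ is the design matrix with dimensions $\mathbb{X} \in \mathbb{R}^{n \times (p+1)}$, and $\theta$ is the parameter vector with dimensions $\theta \in \mathbb{R}^{(p+1)}$. Note that our true output $\mathbb{Y}$ is also a vector with $n$ elements ($\mathbb{Y} \in \mathbb{R}^{n}$).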
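The matrix form can be sketched in NumPy as follows. The small table below is a made-up stand-in for a few rows of the NBA data, and the parameter values are hypothetical; the point is only to show the shapes involved and that one matrix-vector product reproduces the row-by-row dot products.

```python
import numpy as np
import pandas as pd

# Made-up stand-in for a few rows of the NBA table (illustrative values only).
toy = pd.DataFrame({
    "FG": [1.8, 0.4, 1.1, 6.0],
    "AST": [0.6, 0.8, 1.9, 1.6],
    "3PA": [4.1, 1.5, 2.2, 0.0],
})

# Design matrix: a column of ones for the intercept, then one column per feature.
X = np.column_stack([np.ones(len(toy)), toy.to_numpy()])

# Hypothetical parameter vector with p + 1 = 4 entries.
theta = np.array([0.5, 2.0, 0.1, 0.4])

# One matrix-vector product yields all n predictions at once.
Y_hat = X @ theta

# Repeating the single-observation dot product for every row gives the same result.
Y_hat_loop = np.array([row @ theta for row in X])

print(X.shape, theta.shape, Y_hat.shape)
```

Here `X` has shape $n \times (p+1)$, `theta` has $p+1$ entries, and `Y_hat` has $n$ entries, matching the dimensions stated above.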

Mean Squared Error

We now have a new approach to understanding models in terms of vectors and matrices. To accompany this new convention, we should update our understanding of risk functions and model fitting.

Recall our definition of MSE:

$$R(\theta) = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

At its heart, the MSE is a measure of distance – it gives an indication of how “far away” the predictions are from the true values, on average.

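We can express the MSE as a squared L2 norm if we rewrite it in terms of the prediction vector, $\hat{\mathbb{Y}}$, and true target vector, $\mathbb{Y}$:

$$R(\theta) = \frac{1}{n} \left( \|\mathbb{Y} - \hat{\mathbb{Y}}\|_2 \right)^2$$

Here, the superscript 2 outside of the parentheses means that we are squaring the norm. If we plug in our linear model $\hat{\mathbb{Y}} = \mathbb{X} \theta$, we find the MSE cost function in vector notation:

$$R(\theta) = \frac{1}{n} \left( \|\mathbb{Y} - \mathbb{X} \theta\|_2 \right)^2$$

Under the linear algebra perspective, our new task is to fit the optimal parameter vector $\theta$ such that the cost function is minimized. Equivalently, we wish to minimize the norm

$$\|\mathbb{Y} - \mathbb{X} \theta\|_2 = \|\mathbb{Y} - \hat{\mathbb{Y}}\|_2$$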

We can restate this goal in two ways:

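Minimize the distance between the vector of true values, $\mathbb{Y}$, and the vector of predicted values, $\hat{\mathbb{Y}}$

Minimize the length of the residual vector, defined as:

$$e = \mathbb{Y} - \hat{\mathbb{Y}} = \begin{bmatrix} y_1 - \hat{y}_1 \\ y_2 - \hat{y}_2 \\ \vdots \\ y_n - \hat{y}_n \end{bmatrix}$$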
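As a quick check that the two ways of writing the MSE agree, the sketch below computes it once as an average of squared errors and once as a squared L2 norm divided by $n$. The true values and predictions are hypothetical numbers chosen for illustration.

```python
import numpy as np

Y = np.array([3.0, 5.0, 7.5, 10.0])       # hypothetical true values
Y_hat = np.array([2.5, 5.5, 7.0, 11.0])   # hypothetical predictions

# Residual vector: elementwise difference between truth and prediction.
e = Y - Y_hat

# MSE as the average of squared residuals.
mse_sum = np.mean(e ** 2)

# MSE as the squared L2 norm of the residual vector, divided by n.
mse_norm = np.linalg.norm(e, ord=2) ** 2 / len(Y)

print(mse_sum, mse_norm)
```

Because the two quantities differ only by the constant factor $\frac{1}{n}$ and a square, minimizing the MSE and minimizing the length of the residual vector select the same $\theta$.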

A Note on Terminology for Multiple Linear Regression

There are several equivalent terms in the context of regression. The ones we use most often for this course are bolded.

$x$ can be called a

Feature(s)

Covariate(s)

Independent variable(s)

Explanatory variable(s)

Predictor(s)

Input(s)

Regressor(s)

$y$ can be called an

Output

Outcome

Response

Dependent variable

$\hat{y}$ can be called a

Prediction

Predicted response

Estimated value

$\theta$ can be called a

Weight(s)

Parameter(s)

Coefficient(s)

$\hat{\theta}$ can be called a

Estimator(s)

Optimal parameter(s)

A datapoint is also called an observation.

Geometric Derivation

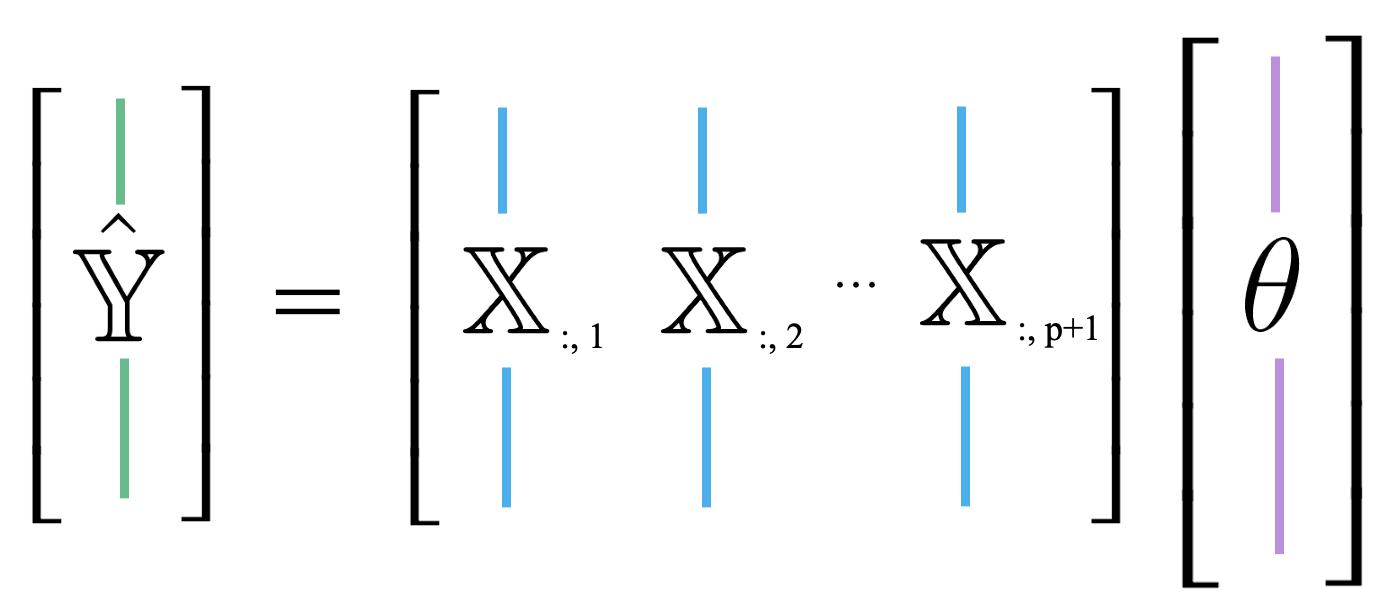

Up until now, we’ve mostly thought of our model as a scalar product between horizontally stacked observations and the parameter vector. We can also think of $\hat{\mathbb{Y}}$ as a linear combination of feature vectors, scaled by the parameters. We use the notation $\mathbb{X}_{:, i}$ to denote the $i$th column of the design matrix. You can think of this as following the same convention as used when calling .iloc and .loc. “:” means that we are taking all entries in the $i$th column.

This new approach is useful because it allows us to take advantage of the properties of linear combinations.
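The column view can be checked numerically. In this sketch the design matrix and parameters are arbitrary illustrative values, and NumPy's `X[:, j]` plays the role of the column notation (note that NumPy counts columns from 0).

```python
import numpy as np

# Arbitrary illustrative design matrix: intercept column plus two features.
X = np.array([
    [1.0, 2.0, 0.5],
    [1.0, 0.0, 1.5],
    [1.0, 3.0, 2.0],
    [1.0, 1.0, 0.0],
])
theta = np.array([1.0, 2.0, -1.0])  # hypothetical parameters

# Row view: each prediction is the dot product of one row with theta.
row_view = X @ theta

# Column view: the prediction vector is a weighted sum of the columns of X.
column_view = sum(theta[j] * X[:, j] for j in range(X.shape[1]))

print(row_view)
print(column_view)
```

Both views produce the same prediction vector; the column view is the one that makes the span argument possible.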

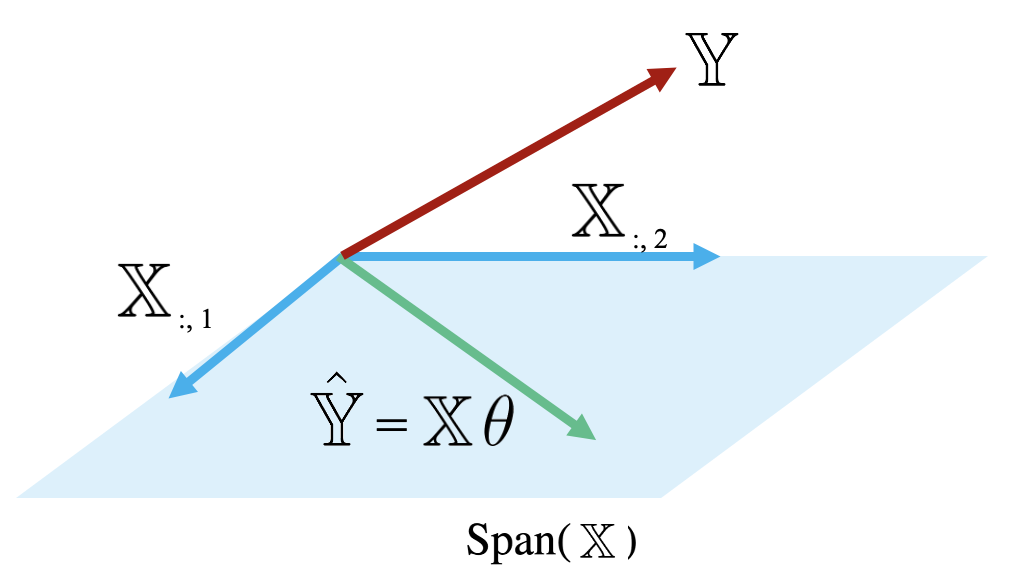

Because the prediction vector, $\hat{\mathbb{Y}} = \mathbb{X} \theta$, is a linear combination of the columns of $\mathbb{X}$, we know that the predictions are contained in the span of $\mathbb{X}$. That is, we know that $\hat{\mathbb{Y}} \in \text{Span}(\mathbb{X})$.

The diagram below is a simplified view of $\text{Span}(\mathbb{X})$, assuming that each column of $\mathbb{X}$ has length $n$. Notice that the columns of $\mathbb{X}$ define a subspace of $\mathbb{R}^n$, where each point in the subspace can be reached by a linear combination of $\mathbb{X}$'s columns. The prediction vector $\hat{\mathbb{Y}}$ lies somewhere in this subspace.

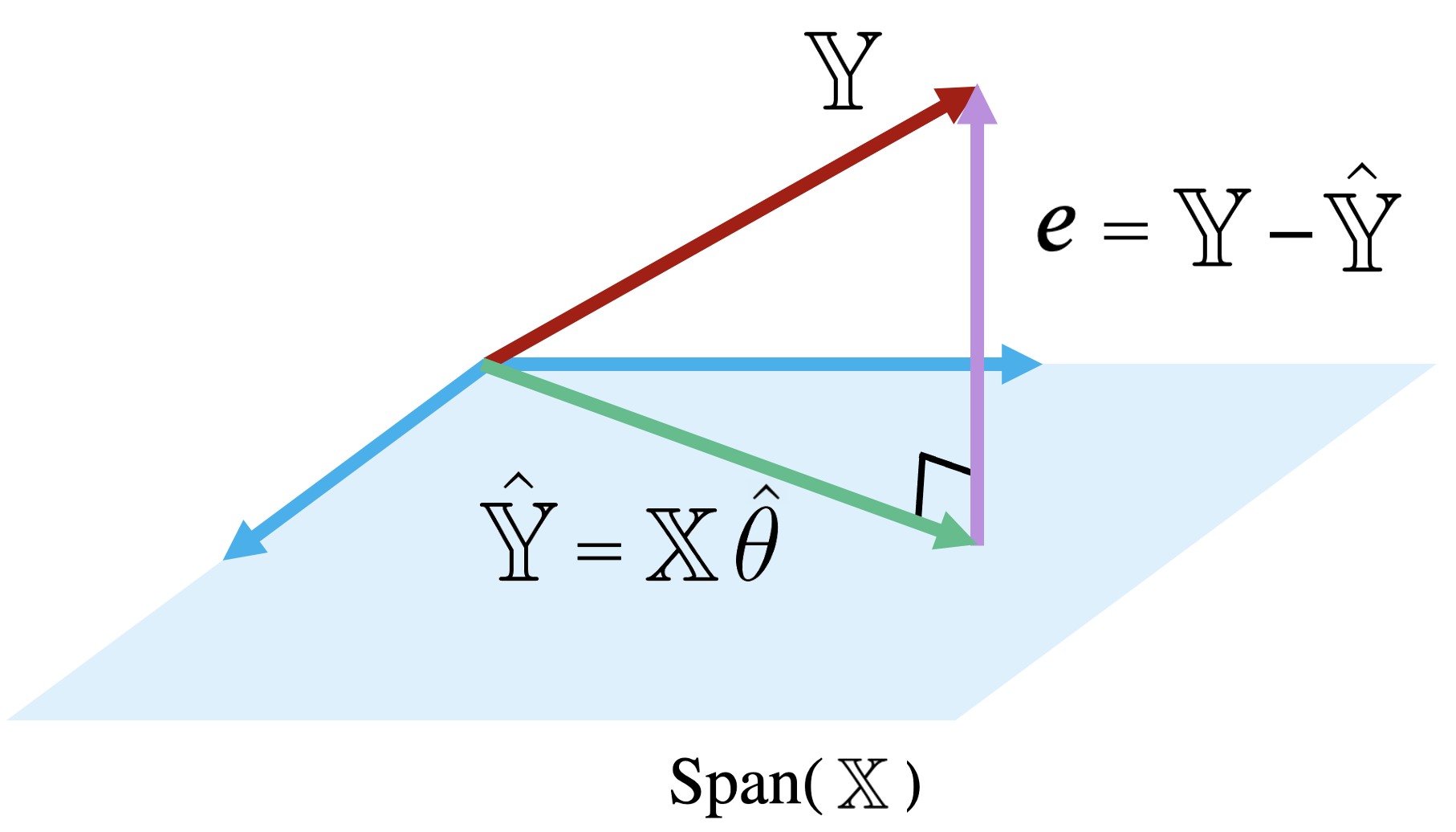

Examining this diagram, we find a problem. The vector of true values, $\mathbb{Y}$, could theoretically lie anywhere in $\mathbb{R}^n$ space – its exact location depends on the data we collect out in the real world. However, our multiple linear regression model can only make predictions in the subspace of $\mathbb{R}^n$ spanned by $\mathbb{X}$. Remember the model fitting goal we established in the previous section: we want to generate predictions such that the distance between the vector of true values, $\mathbb{Y}$, and the vector of predicted values, $\hat{\mathbb{Y}}$, is minimized. This means that we want $\hat{\mathbb{Y}}$ to be the vector in $\text{Span}(\mathbb{X})$ that is closest to $\mathbb{Y}$.

Another way of rephrasing this goal is to say that we wish to minimize the length of the residual vector $e$, as measured by its $L_2$ norm.

The vector in $\text{Span}(\mathbb{X})$ that is closest to $\mathbb{Y}$ is always the orthogonal projection of $\mathbb{Y}$ onto $\text{Span}(\mathbb{X})$. Thus, we should choose the parameter vector $\theta$ that makes the residual vector orthogonal to any vector in $\text{Span}(\mathbb{X})$. You can visualize this as the vector created by dropping a perpendicular line from $\mathbb{Y}$ onto the span of $\mathbb{X}$.

Remember our goal is to find $\hat{\theta}$ such that we minimize the objective function $R(\theta)$. Equivalently, this is the $\hat{\theta}$ such that the residual vector $e = \mathbb{Y} - \mathbb{X} \hat{\theta}$ is orthogonal to $\text{Span}(\mathbb{X})$.

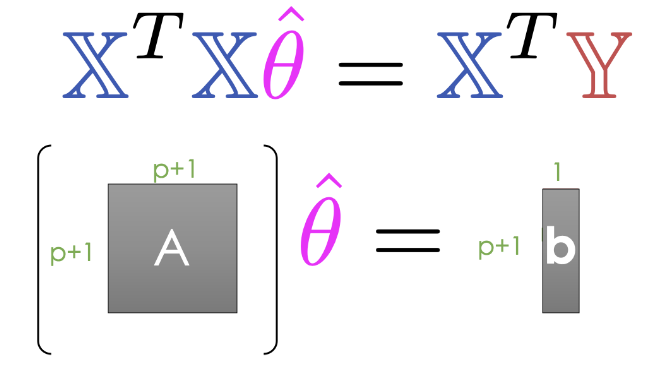

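Looking at the definition of orthogonality of $\mathbb{Y} - \mathbb{X} \hat{\theta}$ to $\text{Span}(\mathbb{X})$, we can write:

$$\mathbb{X}^T \left( \mathbb{Y} - \mathbb{X} \hat{\theta} \right) = 0$$

Each row of this equation says that one column of $\mathbb{X}$ has a dot product of 0 with the residual vector; a vector that is orthogonal to every column of $\mathbb{X}$ is orthogonal to every linear combination of those columns. Let’s then rearrange the terms:

$$\mathbb{X}^T \mathbb{Y} - \mathbb{X}^T \mathbb{X} \hat{\theta} = 0$$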

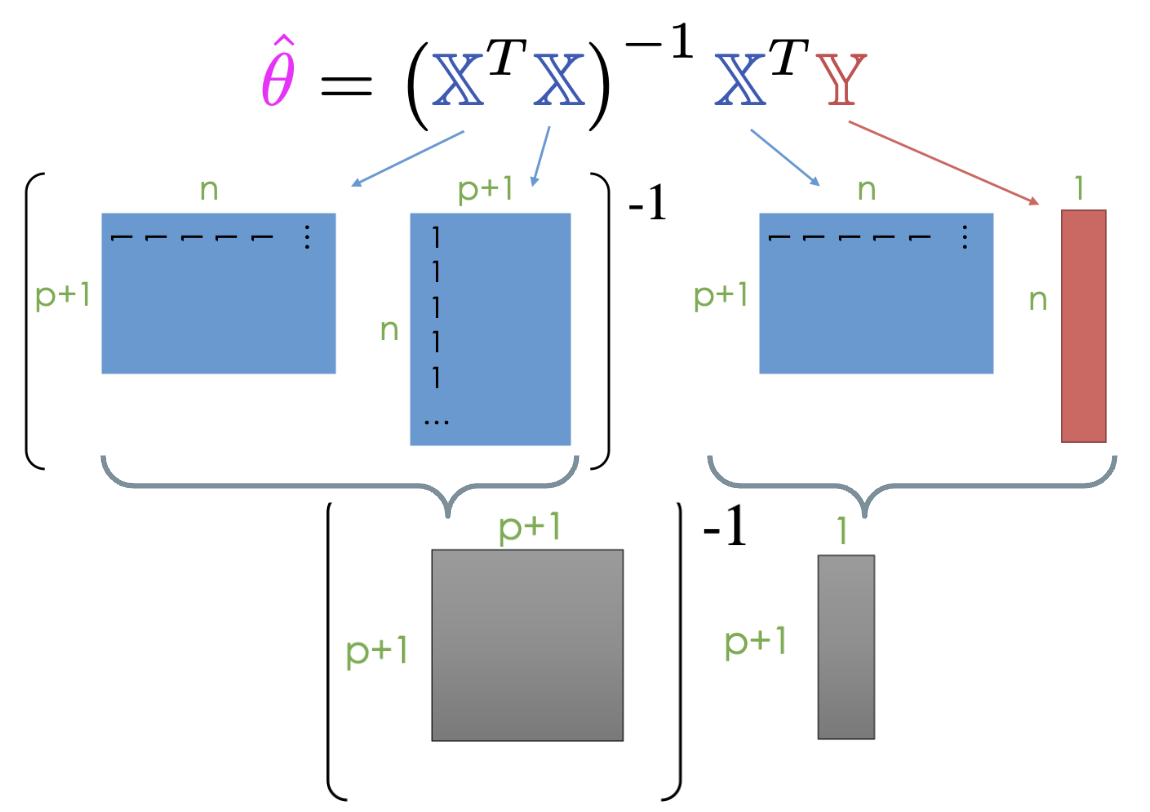

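And finally, we end up with the normal equation:

$$\mathbb{X}^T \mathbb{X} \hat{\theta} = \mathbb{X}^T \mathbb{Y}$$

Any vector $\theta$ that minimizes MSE on a dataset must satisfy this equation.

If $\mathbb{X}^T \mathbb{X}$ is invertible, we can conclude:

$$\hat{\theta} = (\mathbb{X}^T \mathbb{X})^{-1} \mathbb{X}^T \mathbb{Y}$$

This is called the least squares estimate of $\theta$: it is the value of $\theta$ that minimizes the squared loss.

Note that the least squares estimate was derived under the assumption that $\mathbb{X}^T \mathbb{X}$ is invertible. This condition holds true when $\mathbb{X}^T \mathbb{X}$ is full column rank, which, in turn, happens when $\mathbb{X}$ is full column rank. We will get to the proof for why $\mathbb{X}$ needs to be full column rank in the OLS Properties section.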
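A minimal sketch of solving the normal equation numerically is shown below. The data are synthetic (generated from made-up "true" parameters plus noise), so the names `true_theta`, `features`, and the chosen sizes are assumptions for illustration only. The sketch solves the linear system with `np.linalg.solve` rather than forming the inverse, and compares the answer with NumPy's general least squares routine.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic data: n observations, p features, plus an intercept column.
n, p = 50, 3
features = rng.normal(size=(n, p))
X = np.column_stack([np.ones(n), features])

# Made-up parameters used only to generate the synthetic targets.
true_theta = np.array([1.0, 2.0, -0.5, 0.3])
Y = X @ true_theta + rng.normal(scale=0.1, size=n)

# Solve the normal equation (X^T X) theta = X^T Y as a linear system.
theta_hat = np.linalg.solve(X.T @ X, X.T @ Y)

# Cross-check against NumPy's least squares solver.
theta_lstsq, *_ = np.linalg.lstsq(X, Y, rcond=None)

# The residual vector should be orthogonal to every column of X.
residual = Y - X @ theta_hat

print(theta_hat)
print(X.T @ residual)
```

The second printed vector should be zero up to floating-point error, which is the orthogonality condition that the derivation started from.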

OLS Properties

Residuals

When using the optimal parameter vector, our residuals $e = \mathbb{Y} - \hat{\mathbb{Y}}$ are orthogonal to $\text{Span}(\mathbb{X})$.

The Bias/Intercept Term

For all linear models with an intercept term, the sum of residuals is zero.
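The intercept property can be illustrated on synthetic data. The sketch below fits the same made-up dataset twice, once with a column of ones and once without, and compares the sums of the residuals. The data-generating values (intercept 5, slope 2) are assumptions chosen so that the difference is easy to see.

```python
import numpy as np

rng = np.random.default_rng(1)

# Synthetic data with a clearly nonzero intercept.
n = 40
x = rng.uniform(0, 10, size=n)
Y = 5.0 + 2.0 * x + rng.normal(scale=1.0, size=n)

# Design matrices with and without the column of ones.
X_with = np.column_stack([np.ones(n), x])
X_without = x.reshape(-1, 1)

# Least squares fits for both designs.
theta_with, *_ = np.linalg.lstsq(X_with, Y, rcond=None)
theta_without, *_ = np.linalg.lstsq(X_without, Y, rcond=None)

# Residual vectors for both fits.
e_with = Y - X_with @ theta_with
e_without = Y - X_without @ theta_without

print(e_with.sum())     # zero up to floating-point error
print(e_without.sum())  # generally not zero
```

With the intercept column present, orthogonality of the residuals to the column of ones is exactly the statement that the residuals sum to zero. Without that column, nothing forces the sum to vanish.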

Existence of a Unique Solution

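To summarize:

| Model | Estimate | Unique? |
|---|---|---|
| Constant Model + MSE | $\hat{\theta}_0 = \text{mean}(y) = \bar{y}$ | Yes. Any set of values has a unique mean. |
| Constant Model + MAE | $\hat{\theta}_0 = \text{median}(y)$ | Yes, if $n$ is odd. No, if $n$ is even (return the average of the middle 2 values). |
| Simple Linear Regression + MSE | $\hat{\theta}_0 = \bar{y} - \hat{\theta}_1 \bar{x}$, $\hat{\theta}_1 = r \frac{\sigma_y}{\sigma_x}$ | Yes. Any set of non-constant* values has a unique mean, SD, and correlation coefficient. |
| OLS (Linear Model + MSE) | $\hat{\theta} = (\mathbb{X}^T \mathbb{X})^{-1} \mathbb{X}^T \mathbb{Y}$ | Yes, if $\mathbb{X}$ is full column rank (all columns are linearly independent, # of datapoints >>> # of features). |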

Uniqueness of the OLS Solution

In most settings, the number of observations (n) is much greater than the number of features (p).

In practice, instead of directly inverting matrices, we can use more efficient numerical solvers to directly solve a system of linear equations using the normal equation shown below. Note that at least one solution always exists: intuitively, we can always draw a line of best fit for a given set of data, but there may be multiple lines that are “equally good”. (Formal proof is beyond this course.)

$$\mathbb{X}^T \mathbb{X} \hat{\theta} = \mathbb{X}^T \mathbb{Y}$$

The least squares estimate $\hat{\theta}$ is unique if and only if $\mathbb{X}$ is full column rank.

Therefore, if $\mathbb{X}$ is not full column rank, we will not have unique estimates. This can happen for two major reasons.

If our design matrix is “wide”:

If $n < p$, then we have more features (columns) than observations (rows).

Then $\text{rank}(\mathbb{X}) \le \min(n, p+1) < p+1$, so $\hat{\theta}$ is not unique.

Typically we have $n \gg p$, so this is less of an issue.

If our design matrix has features that are linear combinations of other features:

By definition, the rank of $\mathbb{X}$ is the number of linearly independent columns in $\mathbb{X}$.

Example: If “Width”, “Height”, and “Perimeter” are all columns, then Perimeter = 2 * Width + 2 * Height, so $\mathbb{X}$ is not full rank.

Important with one-hot encoding (to discuss later).
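Both failure modes can be seen with `np.linalg.matrix_rank`. The widths and heights below are made-up numbers; the sketch builds a design matrix with a redundant Perimeter column, shows that its rank is smaller than its number of columns, and exhibits two different parameter vectors that produce identical predictions.

```python
import numpy as np

# Made-up measurements for five rectangles.
width = np.array([1.0, 2.0, 3.0, 4.0, 5.0])
height = np.array([2.0, 1.0, 4.0, 3.0, 6.0])
perimeter = 2 * width + 2 * height  # exact linear combination of the others

# Design matrix with intercept, Width, Height, and the redundant Perimeter.
X = np.column_stack([np.ones(5), width, height, perimeter])
rank = np.linalg.matrix_rank(X)
print(rank, X.shape[1])  # the rank is smaller than the number of columns

# Two different parameter vectors that give identical predictions.
theta_a = np.array([0.0, 1.0, 1.0, 0.0])
theta_b = np.array([0.0, -1.0, -1.0, 1.0])
print(np.allclose(X @ theta_a, X @ theta_b))

# A "wide" design matrix: fewer rows than columns.
X_wide = X[:2, :3]
rank_wide = np.linalg.matrix_rank(X_wide)
print(rank_wide, X_wide.shape[1])
```

Since `theta_a` and `theta_b` produce the same predictions, they have the same MSE on any target vector, so no loss function built from the predictions can prefer one over the other.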

Evaluating Model Performance

Our geometric view of multiple linear regression has taken us far! We have identified the optimal set of parameter values to minimize MSE in a model of multiple features. Now, we want to understand how well our fitted model performs.

RMSE

One measure of model performance is the Root Mean Squared Error, or RMSE. The RMSE is simply the square root of MSE. Taking the square root converts the value back into the original, non-squared units of $y_i$, which is useful for understanding the model’s performance. A low RMSE indicates more “accurate” predictions – that there is a lower average loss across the dataset.

$$\text{RMSE} = \sqrt{\frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2}$$
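As a small worked example, the sketch below computes the MSE and RMSE for four hypothetical observations and predictions.

```python
import numpy as np

Y = np.array([10.0, 12.0, 15.0, 20.0])      # hypothetical true values
Y_hat = np.array([11.0, 11.0, 17.0, 18.0])  # hypothetical predictions

# MSE is in squared units of y; RMSE is back in the original units.
mse = np.mean((Y - Y_hat) ** 2)
rmse = np.sqrt(mse)

print(mse, rmse)
```

The errors here are -1, 1, -2, and 2, so the MSE is 2.5 and the RMSE is its square root, roughly 1.58 in the units of $y$.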

Residual Plots

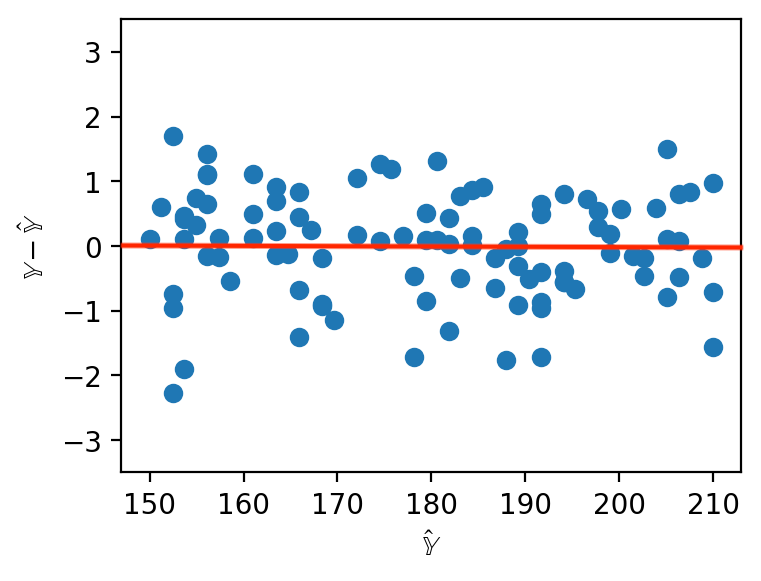

When working with SLR, we generated plots of the residuals against a single feature to understand the behavior of residuals. When working with several features in multiple linear regression, it no longer makes sense to consider a single feature in our residual plots. Instead, multiple linear regression is evaluated by making plots of the residuals against the predicted values. As was the case with SLR, a multiple linear model performs well if its residual plot shows no patterns.

Multiple $R^2$

For SLR, we used the correlation coefficient $r$ to capture the association between the target variable and a single feature variable. In a multiple linear model setting, we will need a performance metric that can account for multiple features at once. Multiple $R^2$, also called the coefficient of determination, is the ratio of the variance of our fitted values (predictions) $\hat{y}_i$ to the variance of our true values $y_i$. For OLS with an intercept term, it ranges from 0 to 1 and is effectively the proportion of variance in the observations that the model explains.

$$R^2 = \frac{\text{variance of } \hat{y}_i}{\text{variance of } y_i} = \frac{\sigma^2_{\hat{y}}}{\sigma^2_{y}}$$

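Note that for OLS with an intercept term, for example $\hat{y} = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \dots + \theta_p x_p$, $R^2$ is equal to the square of the correlation between $y$ and $\hat{y}$. In the special case of SLR, $R^2$ is also equal to $r^2$, the square of the correlation between $x$ and $y$. The proof of these last two properties is out of scope for this course.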
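The first of these properties can be checked numerically. The sketch below uses synthetic data (the generating parameters are made up), fits OLS with an intercept, and computes $R^2$ both as a ratio of variances and as a squared correlation between the true and fitted values.

```python
import numpy as np

rng = np.random.default_rng(2)

# Synthetic data generated from made-up parameters plus noise.
n = 100
features = rng.normal(size=(n, 3))
X = np.column_stack([np.ones(n), features])
Y = X @ np.array([1.0, 2.0, -1.0, 0.5]) + rng.normal(scale=1.0, size=n)

# OLS fit with an intercept column.
theta_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)
Y_hat = X @ theta_hat

# R^2 as the ratio of the variance of fitted values to the variance of true values.
r2_variance = np.var(Y_hat) / np.var(Y)

# R^2 as the squared correlation between true and fitted values.
r2_corr = np.corrcoef(Y, Y_hat)[0, 1] ** 2

print(r2_variance, r2_corr)
```

The two numbers agree up to floating-point error. The agreement relies on the intercept column; without it, the two formulas can differ.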

Additionally, as we add more features, our fitted values tend to become closer and closer to our actual values. Thus, $R^2$ increases.
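To see this effect, the sketch below starts from a synthetic one-feature dataset and repeatedly appends columns of pure random noise that have no relationship to the target. The helper `r_squared` is a hypothetical function written for this illustration, and all data are made up.

```python
import numpy as np

def r_squared(X, Y):
    """Fit OLS on (X, Y) and return var(fitted values) / var(true values)."""
    theta_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)
    return np.var(X @ theta_hat) / np.var(Y)

rng = np.random.default_rng(3)

# Synthetic data: the target depends on a single real feature.
n = 30
x = rng.normal(size=n)
Y = 1.0 + 2.0 * x + rng.normal(scale=1.0, size=n)
X = np.column_stack([np.ones(n), x])

# Record R^2 on the same data as noise-only features are added.
scores = [r_squared(X, Y)]
for _ in range(5):
    X = np.column_stack([X, rng.normal(size=n)])
    scores.append(r_squared(X, Y))

print(scores)
```

On the data used for fitting, $R^2$ never goes down as columns are added, even though the new columns carry no information about the target.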

Adding more features doesn’t always mean our model is better though! We’ll see why later in the course.