Up until this point in the semester, we’ve focused on analyzing datasets. We’ve looked into the early stages of the data science lifecycle, focusing on the programming tools, visualization techniques, and data cleaning methods needed for data analysis.

This lecture marks a shift in focus. We will move away from examining datasets to actually using our data to better understand the world. Specifically, the next sequence of lectures will explore predictive modeling: generating models to make some predictions about the world around us. In this lecture, we’ll introduce the conceptual framework for setting up a modeling task. In the next few lectures, we’ll put this framework into practice by implementing various kinds of models.

What is a Model?¶

A model is an idealized representation of a system. A system is a set of principles or procedures according to which something functions. We live in a world full of systems: the procedure of turning on a light happens according to a specific set of rules dictating the flow of electricity. The truth behind how any event occurs is usually complex, and many times the specifics are unknown. The workings of the world can be viewed as its own giant procedure. Models seek to simplify the world and distill them into workable pieces.

Example: We model the fall of an object on Earth as subject to a constant acceleration of due to gravity.

This is an approximate description of a system

It doesn’t account for air resistance, topography, etc

But in practice, it’s accurate enough to be useful!

Reasons for Building Models¶

Why do we want to build models? As far as data scientists and statisticians are concerned, there are three reasons, and each implies a different focus on modeling.

Inference: Make sense of phenomena. For example,

How do parents’ heights relate to children’s heights?

What is the correlation of income and education?

We often want simple and interpretable models to help us understand relationships

Prediction: Make accurate predictions about unseen data. Some examples include:

Is an email spam or not?

Generate a summary of a 10-page long article

When making prediction, we care more about making extremely accurate predictions, at the cost of having a less interpretable or black-box model. Uninterpretable models are common in fields like deep learning.

Causality: Assess whether one thing causes something else. For example,

Does smoking cause lung cancer?

Does a job training program increase in employment and wages?

This is a much harder question! Most statistical tools are designed to infer association, not causation. We will not focus on this task in Data 100, but you can take other advanced classes on causal inference (e.g., Stat 156, Data 102) if you are intrigued!

Most of the time, we aim to strike a balance between building interpretable models and building accurate models.

Note that these three reasons can overlap! The distinctions are not always clear cut.

[NOT IN SCOPE] Common Types of Models¶

In general, models can be split into two categories:

Deterministic physical (mechanistic) models: Laws that govern how the world works.

Kepler’s Third Law of Planetary Motion (1619): The ratio of the square of an object’s orbital period with the cube of the semi-major axis of its orbit is the same for all objects orbiting the same primary.

Newton’s Laws: motion and gravitation (1687): Newton’s second law of motion models the relationship between the mass of an object and the force required to accelerate it.

Probabilistic models: Models that attempt to understand how random processes evolve. These are more general and can be used to describe many phenomena in the real world. These models commonly make simplifying assumptions about the nature of the world.

Poisson Process models: Used to model random events that happen with some probability at any point in time and are strictly increasing in count, such as the arrival of customers at a store.

Note: These specific models are not in the scope of Data 100 and exist to serve as motivation.

Simple Linear Regression¶

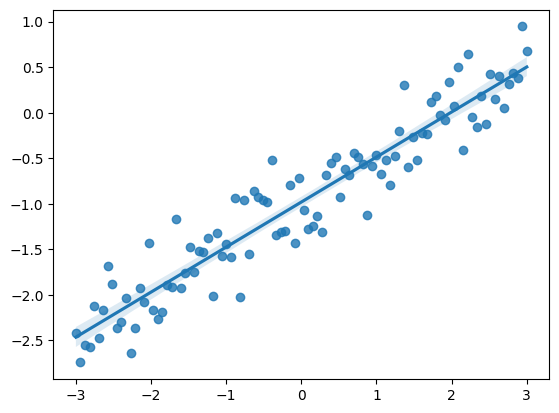

The regression line is the unique straight line that minimizes the mean squared error of estimation among all straight lines. As with any straight line, it can be defined by a slope and a y-intercept:

Click to see the code

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

# Set random seed for consistency

np.random.seed(43)

plt.style.use('default')

# Generate random noise for plotting

x = np.linspace(-3, 3, 100)

y = x * 0.5 - 1 + np.random.randn(100) * 0.3

# Plot regression line

sns.regplot(x=x,y=y);

Notations and Definitions¶

For a pair of variables and representing our data , we denote their means/averages as and and standard deviations as and .

Standard Units¶

A variable is represented in standard units if the following are true:

0 in standard units is equal to the mean () in the original variable’s units.

An increase of 1 standard unit is an increase of 1 standard deviation () in the original variable’s units.

To convert a variable into standard units, we subtract its mean from it and divide it by its standard deviation. For example, in standard units is .

Correlation¶

The correlation () is the average of the product of and , both measured in standard units.

In general,

However, when , , , or (which are all satisfied when x and y are both in standard units),

This simpler formula is convenient to work with when possible.

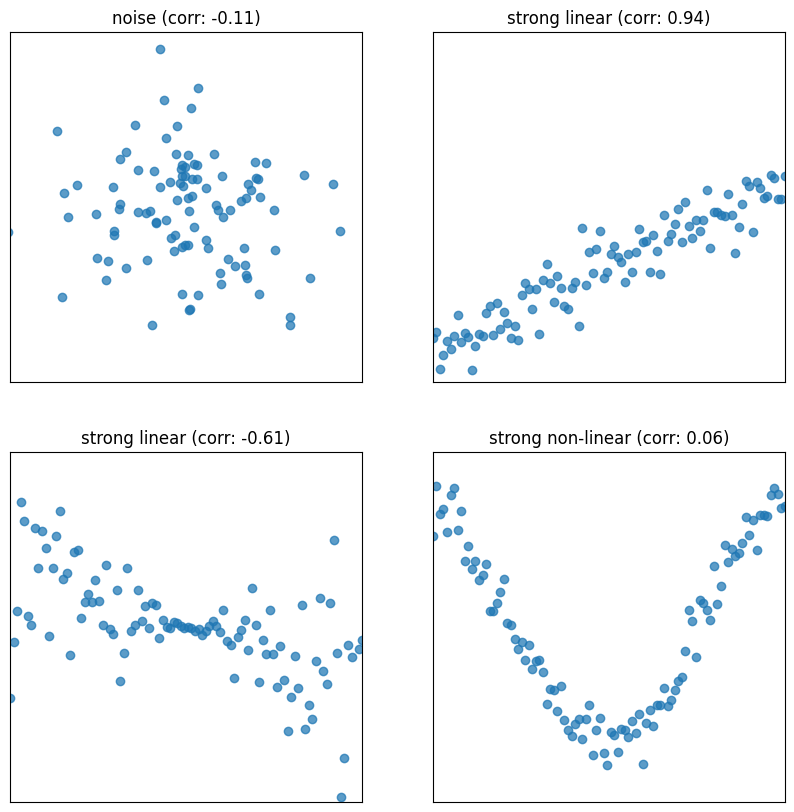

Correlation measures the strength of a linear association between two variables.

Correlations range between -1 and 1: , with indicating perfect positive linear association, and indicating perfect negative association. The closer is to 0, the weaker the linear association is.

Correlation says nothing about causation and non-linear association. Correlation does not imply causation. When , the two variables are uncorrelated. However, they could still be related through some non-linear relationship.

For an intuitive understanding of correlation, when and have the same sign (in standard units), the (, ) pair contributes positively to correlation. Opposite signs contrinbute negatively.

Click to see the code

def plot_and_get_corr(ax, x, y, title):

ax.set_xlim(-3, 3)

ax.set_ylim(-3, 3)

ax.set_xticks([])

ax.set_yticks([])

ax.scatter(x, y, alpha = 0.73)

r = np.corrcoef(x, y)[0, 1]

ax.set_title(title + " (corr: {})".format(r.round(2)))

return r

fig, axs = plt.subplots(2, 2, figsize = (10, 10))

# Just noise

x1, y1 = np.random.randn(2, 100)

corr1 = plot_and_get_corr(axs[0, 0], x1, y1, title = "noise")

# Strong linear

x2 = np.linspace(-3, 3, 100)

y2 = x2 * 0.5 - 1 + np.random.randn(100) * 0.3

corr2 = plot_and_get_corr(axs[0, 1], x2, y2, title = "strong linear")

# Unequal spread

x3 = np.linspace(-3, 3, 100)

y3 = - x3/3 + np.random.randn(100)*(x3)/2.5

corr3 = plot_and_get_corr(axs[1, 0], x3, y3, title = "strong linear")

extent = axs[1, 0].get_window_extent().transformed(fig.dpi_scale_trans.inverted())

# Strong non-linear

x4 = np.linspace(-3, 3, 100)

y4 = 2*np.sin(x3 - 1.5) + np.random.randn(100) * 0.3

corr4 = plot_and_get_corr(axs[1, 1], x4, y4, title = "strong non-linear")

plt.show()

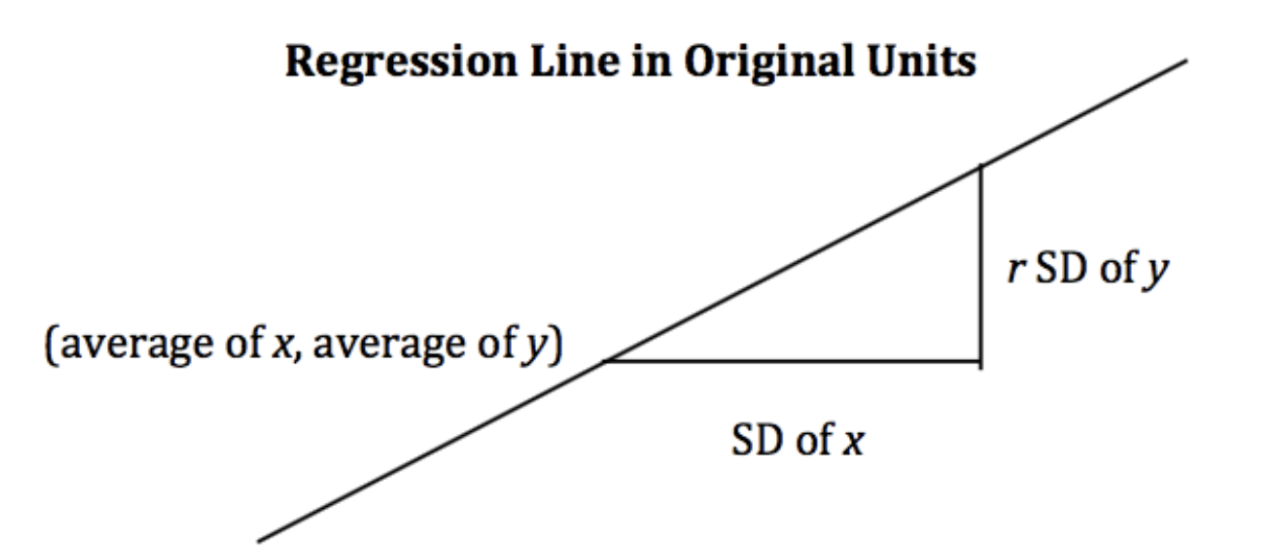

Alternate Form¶

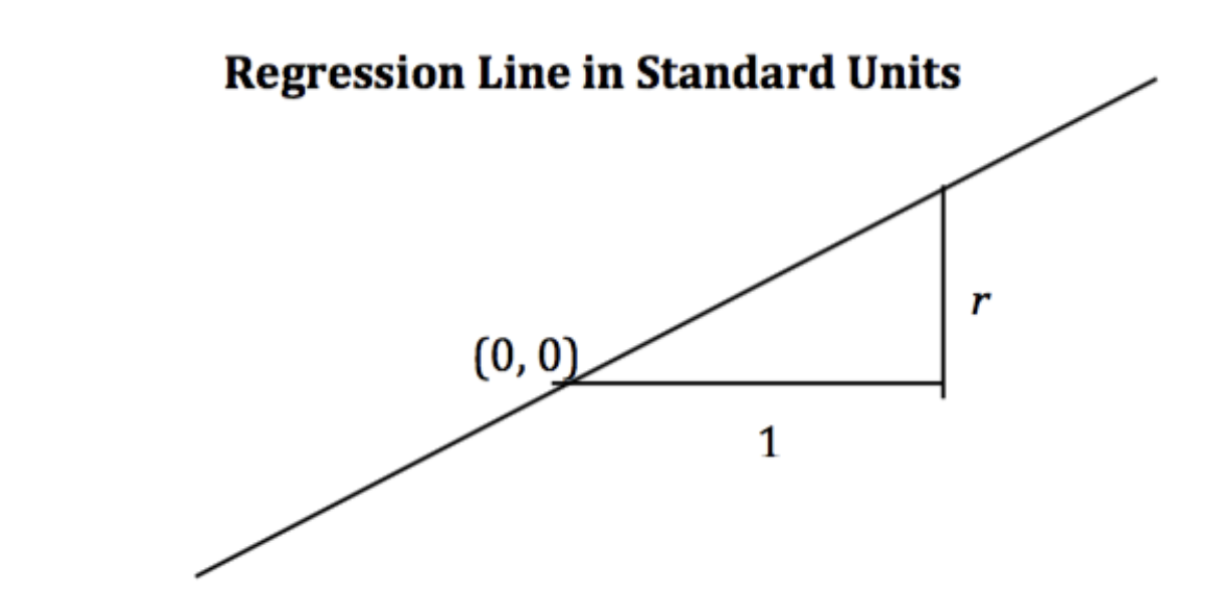

When the variables and are measured in standard units, the regression line for predicting based on has slope and passes through the origin.

Notice that when r = 1, we have perfect prediction!

In the original units, this becomes

Derivation¶

Starting from the top, we have our claimed form of the regression line, and we want to show that it is equivalent to the optimal linear regression line: .

Recall:

The Modeling Process¶

At a high level, a model is a way of representing a system. In Data 100, we’ll treat a model as some mathematical rule we use to describe the relationship between variables.

What variables are we modeling? Typically, we use a subset of the variables in our sample of collected data to model another variable in this data. To put this more formally, say we have the following dataset :

Each pair of values represents a datapoint. In a modeling setting, we call these observations. is the dependent variable we are trying to model, also called an output or response. is the independent variable inputted into the model to make predictions, also known as a feature.

Our goal in modeling is to use the observed data to predict the output variable . We denote each prediction as (read: “y hat sub i”).

How do we generate these predictions? Some examples of models we’ll encounter in the next few lectures are given below:

The examples above are known as parametric models. They relate the collected data, , to the prediction we make, . A few parameters (, , ) are used to describe the relationship between and .

Notice that we don’t immediately know the values of these parameters. While the features, , are taken from our observed data, we need to decide what values to give , , and ourselves. This is the heart of parametric modeling: what parameter values should we choose so our model makes the best possible predictions?

is an estimate of a parameter based on a sample. The “hat” denotes an estimated or predicted quantity. For example, is a prediction. is estimated from data.

Before we move on, note that not all statistical models have parameters! k-Nearest Neighbor classifiers (from Data 8) and KDEs are non-parametric models.

To choose our model parameters, we’ll work through the modeling process.

Choose a model: How should we represent the world?

Choose a loss function: How do we quantify prediction error?

Fit the model: How do we choose the best parameters of our model given our data?

Evaluate model performance: How do we evaluate whether this process gave rise to a good model?

Choosing a Model¶

Our first step is choosing a model: defining the mathematical rule that describes the relationship between the features, , and predictions .

In Data 8, you learned about the Simple Linear Regression (SLR) model. You learned that the model takes the form:

In Data 100, we’ll use slightly different notation: we will replace with and with . This will allow us to use the same notation when we explore more complex models later on in the course.

The parameters of the SLR model are , also called the intercept term, and , also called the slope term. To create an effective model, we want to choose values for and that most accurately predict the output variable. The “best” fitting model parameters are given the special names: and ; they are the specific parameter values that allow our model to generate the best possible predictions.

In Data 8, you learned that the best SLR model parameters are:

A quick reminder on notation:

and indicate the mean value of and , respectively

and indicate the standard deviations of and

is the correlation coefficient, defined as the average of the product of and measured in standard units:

In Data 100, we want to understand how to derive these best model coefficients. To do so, we’ll introduce the concept of a loss function.

Choosing a Loss Function¶

We’ve talked about the idea of creating the “best” possible predictions. This begs the question: how do we decide how “good” or “bad” our model’s predictions are?

A loss function characterizes the cost, error, or fit resulting from a particular choice of model or model parameters. This function, , quantifies how “bad” or “far off” a single prediction by our model is from a true, observed value in our collected data.

The choice of loss function for a particular model will affect the accuracy and computational cost of estimation, and it’ll also depend on the estimation task at hand. For example,

Are outputs quantitative or qualitative?

Do outliers matter?

Are all errors equally costly? (e.g., a false negative on a cancer test is arguably more dangerous than a false positive)

Regardless of the specific function used, a loss function should follow two basic principles:

If the prediction is close to the actual value , loss should be low.

If the prediction is far from the actual value , loss should be high.

Two common choices of loss function are squared loss and absolute loss.

Squared loss, also known as L2 loss, computes loss as the square of the difference between the observed and predicted :

Absolute loss, also known as L1 loss, computes loss as the absolute difference between the observed and predicted :

L1 and L2 loss give us a tool for quantifying our model’s performance on a single data point. This is a good start, but ideally, we want to understand how our model performs across our entire dataset. A natural way to do this is to compute the average loss across all data points in the dataset. This is known as the cost function, :

The cost function has many names in the statistics literature. You may also encounter the terms:

Empirical risk (this is why we give the cost function the name )

Error function

Average loss

We can substitute our L1 and L2 loss into the cost function definition. The Mean Squared Error (MSE) is the average squared loss across a dataset:

The Mean Absolute Error (MAE) is the average absolute loss across a dataset:

Fitting the Model¶

Now that we’ve established the concept of a loss function, we can return to our original goal of choosing model parameters. Specifically, we want to choose the best set of model parameters that will minimize the model’s cost on our dataset. This process is called fitting the model.

We know from calculus that a function is minimized when (1) its first derivative is equal to zero and (2) its second derivative is positive. We often call the function being minimized the objective function (our objective is to find its minimum).

To find the optimal model parameter, we:

Take the derivative of the cost function with respect to that parameter

Set the derivative equal to 0

Solve for the parameter

We repeat this process for each parameter present in the model. For now, we’ll disregard the second derivative condition.

To help us make sense of this process, let’s put it into action by deriving the optimal model parameters for simple linear regression using the mean squared error as our cost function. Remember: although the notation may look tricky, all we are doing is following the three steps above!

Step 1: take the derivative of the cost function with respect to each model parameter. We substitute the SLR model, , into the definition of MSE above and differentiate with respect to and .

Let’s walk through these derivations in more depth, starting with the derivative of MSE with respect to .

Given our MSE above, we know that:

Noting that the derivative of sum is equivalent to the sum of derivatives, this then becomes:

We can then apply the chain rule.

Finally, we can simplify the constants, leaving us with our answer.

Following the same procedure, we can take the derivative of MSE with respect to .

Step 2: set the derivatives equal to 0. After simplifying terms, this produces two estimating equations. The best set of model parameters must satisfy these two optimality conditions.

Step 3: solve the estimating equations to compute estimates for and .

Taking the first equation gives the estimate of :

With a bit more maneuvering, the second equation gives the estimate of . Start by multiplying the first estimating equation by , then subtracting the result from the second estimating equation.

Next, plug in :

By using the definition of correlation and standard deviation , we can conclude:

Just as was given in Data 8!

Remember, this derivation found the optimal model parameters for SLR when using the MSE cost function. If we had used a different model or different loss function, we likely would have found different values for the best model parameters. However, regardless of the model and loss used, we can always follow these three steps to fit the model.

Evaluating the SLR Model¶

Now that we’ve explored the mathematics behind (1) choosing a model, (2) choosing a loss function, and (3) fitting the model, we’re left with one final question – how “good” are the predictions made by this “best” fitted model? To determine this, we can:

Visualize data and compute statistics:

Plot the original data.

Compute each column’s mean and standard deviation. If the mean and standard deviation of our predictions are close to those of the original observed ’s, we might be inclined to say that our model has done well.

(If we’re fitting a linear model) Compute the correlation . A large magnitude for the correlation coefficient between the feature and response variables could also indicate that our model has done well.

Performance metrics:

We can take the Root Mean Squared Error (RMSE).

It’s the square root of the mean squared error (MSE), which is the average loss that we’ve been minimizing to determine optimal model parameters.

RMSE is in the same units as .

A lower RMSE indicates more “accurate” predictions, as we have a lower “average loss” across the data.

Visualization:

Look at the residual plot of to visualize the difference between actual and predicted values. The good residual plot should not show any pattern between input/features and residual values .

To illustrate this process, let’s take a look at Anscombe’s quartet.

Four Mysterious Datasets (Anscombe’s quartet)¶

Let’s take a look at four different datasets.

Click to see the code

Big font helper¶

def adjust_fontsize(size=None): SMALL_SIZE = 8 MEDIUM_SIZE = 10 BIGGER_SIZE = 12 if size != None: SMALL_SIZE = MEDIUM_SIZE = BIGGER_SIZE = size

plt.rc("font", size=SMALL_SIZE) # controls default text sizes

plt.rc("axes", titlesize=SMALL_SIZE) # fontsize of the axes title

plt.rc("axes", labelsize=MEDIUM_SIZE) # fontsize of the x and y labels

plt.rc("xtick", labelsize=SMALL_SIZE) # fontsize of the tick labels

plt.rc("ytick", labelsize=SMALL_SIZE) # fontsize of the tick labels

plt.rc("legend", fontsize=SMALL_SIZE) # legend fontsize

plt.rc("figure", titlesize=BIGGER_SIZE) # fontsize of the figure titleHelper functions¶

def standard_units(x): return (x - np.mean(x)) / np.std(x)

def correlation(x, y): return np.mean(standard_units(x) * standard_units(y))

def slope(x, y): return correlation(x, y) * np.std(y) / np.std(x)

def intercept(x, y): return np.mean(y) - slope(x, y) * np.mean(x)

def fit_least_squares(x, y): theta_0 = intercept(x, y) theta_1 = slope(x, y) return theta_0, theta_1

def predict(x, theta_0, theta_1): return theta_0 + theta_1 * x

def compute_mse(y, yhat): return np.mean((y - yhat) ** 2)

plt.style.use(“default”) # Revert style to default mpl

Click to see the code

plt.style.use("default") # Revert style to default mpl

NO_VIZ, RESID, RESID_SCATTER = range(3)

def least_squares_evaluation(x, y, visualize=NO_VIZ):

# statistics

print(f"x_mean : {np.mean(x):.2f}, y_mean : {np.mean(y):.2f}")

print(f"x_stdev: {np.std(x):.2f}, y_stdev: {np.std(y):.2f}")

print(f"r = Correlation(x, y): {correlation(x, y):.3f}")

# Performance metrics

ahat, bhat = fit_least_squares(x, y)

yhat = predict(x, ahat, bhat)

print(f"\theta_0: {ahat:.2f}, \theta_1: {bhat:.2f}")

print(f"RMSE: {np.sqrt(compute_mse(y, yhat)):.3f}")

# visualization

fig, ax_resid = None, None

if visualize == RESID_SCATTER:

fig, axs = plt.subplots(1, 2, figsize=(8, 3))

axs[0].scatter(x, y)

axs[0].plot(x, yhat)

axs[0].set_title("LS fit")

ax_resid = axs[1]

elif visualize == RESID:

fig = plt.figure(figsize=(4, 3))

ax_resid = plt.gca()

if ax_resid is not None:

ax_resid.scatter(x, y - yhat, color="red")

ax_resid.plot([4, 14], [0, 0], color="black")

ax_resid.set_title("Residuals")

return figClick to see the code

# Load in four different datasets: I, II, III, IV

x = [10, 8, 13, 9, 11, 14, 6, 4, 12, 7, 5]

y1 = [8.04, 6.95, 7.58, 8.81, 8.33, 9.96, 7.24, 4.26, 10.84, 4.82, 5.68]

y2 = [9.14, 8.14, 8.74, 8.77, 9.26, 8.10, 6.13, 3.10, 9.13, 7.26, 4.74]

y3 = [7.46, 6.77, 12.74, 7.11, 7.81, 8.84, 6.08, 5.39, 8.15, 6.42, 5.73]

x4 = [8, 8, 8, 8, 8, 8, 8, 19, 8, 8, 8]

y4 = [6.58, 5.76, 7.71, 8.84, 8.47, 7.04, 5.25, 12.50, 5.56, 7.91, 6.89]

anscombe = {

"I": pd.DataFrame(list(zip(x, y1)), columns=["x", "y"]),

"II": pd.DataFrame(list(zip(x, y2)), columns=["x", "y"]),

"III": pd.DataFrame(list(zip(x, y3)), columns=["x", "y"]),

"IV": pd.DataFrame(list(zip(x4, y4)), columns=["x", "y"]),

}

# Plot the scatter plot and line of best fit

fig, axs = plt.subplots(2, 2, figsize=(10, 10))

for i, dataset in enumerate(["I", "II", "III", "IV"]):

ans = anscombe[dataset]

x, y = ans["x"], ans["y"]

ahat, bhat = fit_least_squares(x, y)

yhat = predict(x, ahat, bhat)

axs[i // 2, i % 2].scatter(x, y, alpha=0.6, color="red") # plot the x, y points

axs[i // 2, i % 2].plot(x, yhat) # plot the line of best fit

axs[i // 2, i % 2].set_xlabel(f"$x_{i+1}$")

axs[i // 2, i % 2].set_ylabel(f"$y_{i+1}$")

axs[i // 2, i % 2].set_title(f"Dataset {dataset}")

plt.show()

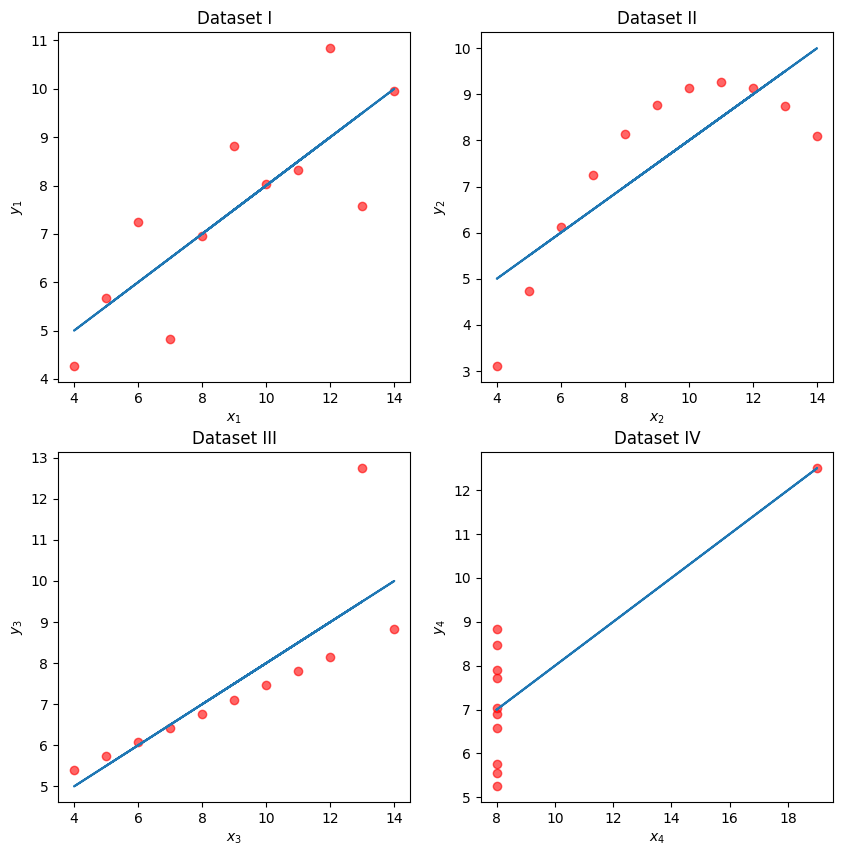

While these four sets of datapoints look very different, they actually all have identical means , , standard deviations , , correlation , and RMSE! If we only look at these statistics, we would probably be inclined to say that these datasets are similar.

for dataset in ["I", "II", "III", "IV"]:

print(f">>> Dataset {dataset}:")

ans = anscombe[dataset]

fig = least_squares_evaluation(ans["x"], ans["y"], visualize=NO_VIZ)

print()

print()>>> Dataset I:

x_mean : 9.00, y_mean : 7.50

x_stdev: 3.16, y_stdev: 1.94

r = Correlation(x, y): 0.816

heta_0: 3.00, heta_1: 0.50

RMSE: 1.119

>>> Dataset II:

x_mean : 9.00, y_mean : 7.50

x_stdev: 3.16, y_stdev: 1.94

r = Correlation(x, y): 0.816

heta_0: 3.00, heta_1: 0.50

RMSE: 1.119

>>> Dataset III:

x_mean : 9.00, y_mean : 7.50

x_stdev: 3.16, y_stdev: 1.94

r = Correlation(x, y): 0.816

heta_0: 3.00, heta_1: 0.50

RMSE: 1.118

>>> Dataset IV:

x_mean : 9.00, y_mean : 7.50

x_stdev: 3.16, y_stdev: 1.94

r = Correlation(x, y): 0.817

heta_0: 3.00, heta_1: 0.50

RMSE: 1.118

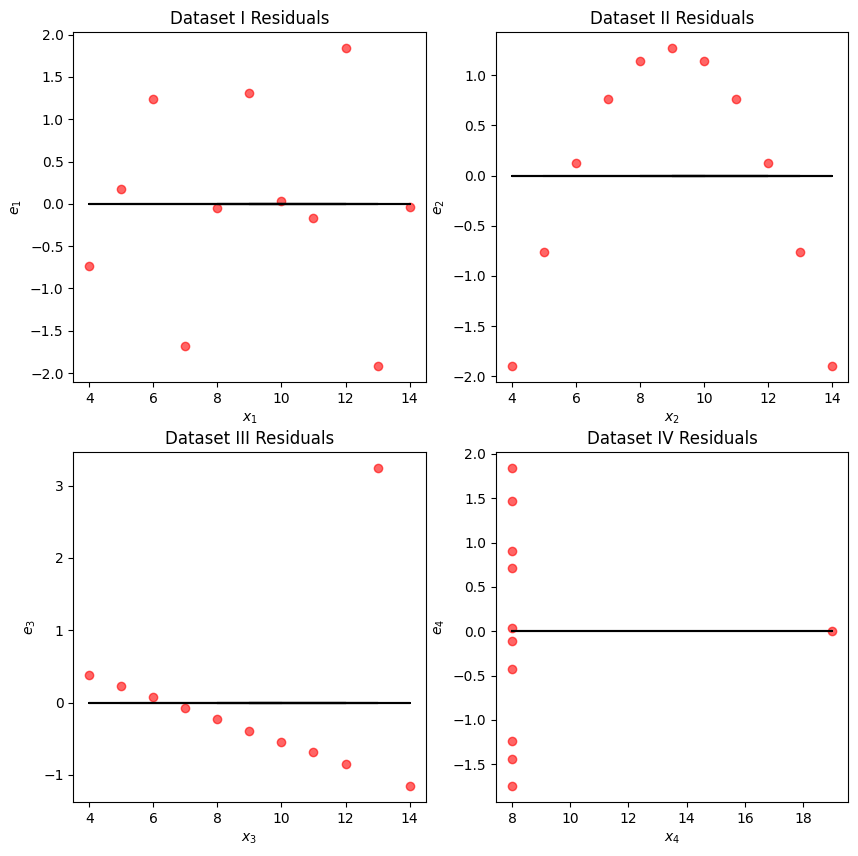

We may also wish to visualize the model’s residuals, defined as the difference between the observed and predicted value (). This gives a high-level view of how “off” each prediction is from the true observed value. Recall that you explored this concept in Data 8: a good regression fit should display no clear pattern in its plot of residuals. The residual plots for Anscombe’s quartet are displayed below. Note how only the first plot shows no clear pattern to the magnitude of residuals. This is an indication that SLR is not the best choice of model for the remaining three sets of points.

Click to see the code

# Residual visualization

fig, axs = plt.subplots(2, 2, figsize=(10, 10))

for i, dataset in enumerate(["I", "II", "III", "IV"]):

ans = anscombe[dataset]

x, y = ans["x"], ans["y"]

ahat, bhat = fit_least_squares(x, y)

yhat = predict(x, ahat, bhat)

axs[i // 2, i % 2].scatter(

x, y - yhat, alpha=0.6, color="red"

) # plot the x, y points

axs[i // 2, i % 2].plot(

x, np.zeros_like(x), color="black"

) # plot the residual line

axs[i // 2, i % 2].set_xlabel(f"$x_{i+1}$")

axs[i // 2, i % 2].set_ylabel(f"$e_{i+1}$")

axs[i // 2, i % 2].set_title(f"Dataset {dataset} Residuals")

plt.show()