Set up Python and Jupyter support for PostgreSQL¶

In a bash shell, add the psycopg2 package to make connections to postgres, and the very handy [ipython-sql] package (https://github.com/catherinedevlin/ipython-sql) to integrate SQL queries into Jupyter notebooks.

% pip install psycopg2

% pip install ipython-sql

Load the ipython-sql magic.

%load_ext sql

%sql postgresql://jmh:@localhost:5432/jmh

SQL: The Query Language¶

The canonical SQL query block includes the following clauses, in the order they appear. Square brackets indicate optional clauses.

SELECT ...

FROM ...

[WHERE ...]

[GROUP BY ...]

[HAVING ...]

[ORDER BY ...]

[LIMIT ...];

Query blocks can reference one or more tables, and be nested in various ways.

Before we worry about multi-table queries or nested queries, we'll work our way through examples that exercise all of these clauses on a single table.

Easy Peasy SQL Basics¶

To start, we are going to define a toy relation (a.k.a. table), populate it with some toy data, and work through some basic SQL. Deeper stuff coming soon though, I promise!

CREATE TABLE¶

First, let's create a table storing information about the professors in this class. This defines the schema of the table.

%%sql

drop table if exists profs;

create table profs (firstname text,

lastname text,

luckynumber integer,

primary key (firstname, lastname));

Note that each column has a fixed data type; PostgreSQL will enforce these types as data is inserted. Note also the definition of a primary key, as we discussed in the Data Wrangling lecture. PostgreSQL will enforce the uniqueness of values in the key columns.

To see what we've done, let's run our first query, dumping out the content of the table: every column for each row. We denote every column with *:

%sql select * from profs;

INSERT VALUES¶

Now let's manually insert some values into the table.

%%sql insert into profs values

('Bin', 'Yu', 3),

('Joseph', 'Gonzalez', 6),

('Joseph', 'Hellerstein', 6),

('Deborah', 'Nolan', 7);

Note how insertions need to have values in the same order as the columns in the create table statement! Let's make sure our data is there:

%sql select * from profs;

And now let's see what happens if we try to mess with that primary key!

%%sql insert into profs values

('Joseph', 'Hellerstein', 7);

UPDATE values¶

OK, we can't have two rows for me, but we can change my lucky number via update:

%%sql update profs

set luckynumber = 7

where firstname = 'Joseph'

and lastname = 'Hellerstein';

And let's check the table now:

%sql select * from profs;

Notice two things:

- The rows may come back in a different order than before. Remember: relations do not have a defined order, and in fact two different orders are just two ways of describing the same relation!

- Note the relational style of the

updatestatement: we decide which rows get updated based entirely on the values in each row, as checked by thewhereclause. There is no notion of any information outside the values in the row--e.g. there are no "object identifiers" or "row numbers"... everything is just the data and only the data.

Simple SELECT Queries¶

Now let's start looking at some slightly more interesting queries.

We can do relational projection--i.e., select specific columns of interest--via the select list:

%%sql

select firstname

from profs;

We can do relational selection--i.e. select specific rows of interest--by adding a where clause:

%%sql

select *

from profs

where luckynumber > 5;

And of course we can specify both rows and columns explicitly. If we have a primary key, we can filter things down to even the cell level via a select list of one column, and a where clause checking equality on the primary key columns:

%%sql

select luckynumber

from profs

where lastname = 'Yu'

and firstname = 'Bin';

Note that even this "single-celled" response still has a uniform data type of a relation. SQL is always manipulating and returning objects of type relation.

Now that you can slice and dice tables into columns, rows and cells, you have enough knowledge to poke around in a database. Let's move on to skills that you'll need as a data scientist.

Working with Real Data in psql¶

In a separate notebook (load_fec.ipynb) you'll find the commands to load publicly-available campaign finance data from the Federal Election Commission into a PostgreSQL database.

To see what we have in the database, it's simplest to use the PostgreSQL shell command psql to interact with the database. You can run man psql to learn more about it. A few handy tips:

psqlsupports some useful non-SQL "meta-"commands, which you access via backslash (\). To find out about them, runpsqlin a bash shell, and at the prompt you can type\?.psqlhas builtin documentation for SQL. To see that, at thepsqlprompt type\help.psqlis an interactive SQL shell, so not suitable for use inside a Jupyter notebook. If you want to invoke it within a Jupyter notebook, you should use!psql -c <SQL statement>-- the-cflag tells psql to run the SQL statement and then exit:

!psql -c "select * from profs;"

Let's see what tables we have our database after loading the FEC data:

!psql -c "\d"

And let's have a look at the individual table's schema:

!psql -c "\d individual"

Browsing Tables: LIMIT and sampling¶

This is not the first topic usually taught in SQL, but it's extremely useful for exploration.

OK, now we have some serious data loaded and we're ready to explore it.

Database tables are often big--hence the use of a database system. When browsing them at first, we may want to look at exemplary rows: e.g., an arbitrary number of rows, or a random sample of the rows.

To look at all of the data in the individual table, we would simply write:

select * \

from individual;

But that would return 39,986,122 rows into our Jupyter notebook's memory, and perhaps overflow the RAM in your computer. Instead, we could limit the size of the output to the first 3 rows as follows:

%%sql

select *

from individual

limit 4;

Some notes on the limit clause:

- Not only does it produce a small output, it's quite efficient: the database system stops iterating over the table after producing the first three rows, saving the work of examining the other nearly 40 million rows.

- Recall that relations have no intrinsic order, so this is some arbitrary choice of 3 rows. Two issues to keep in mind:

- This is a biased choice of rows. Very likely these are the first 3 rows stored in some disk file managed by the database, which may (for example) be the first 3 rows that were entered into the database, so they may not be representative of rows entered later.

- The result is non-deterministic. Given that tables are not guaranteed to have an intrinsic order, it is considered correct for an SQL engine to return any 3 rows that satisfy this query, and return a different 3 rows each time!

As data scientists, we should be concerned about spending much time looking at a biased subset of our data. Instead, we might want an i.i.d. random sample of the rows in the table. There are various methods for sampling from a table. A simple one built into many database systems including PostgreSQL is Bernoulli sampling, in which the decision to return each row is made randomly and independently. As a metaphor, the database engine "flips a coin" for each row to decide whether to return it. We can influence the sampling rate by choosing the probability of a "true" result of the coinflip. This is done on a per-table basis in the FROM clause of the query like so:

%%sql

select *

from individual tablesample bernoulli(.00001);

Three things to note relative to our previous limit construct:

- Bernoulli sampling is slow: it scales linearly with the table size by iterating through every row in the table.

- The number of rows returned by Bernoulli sampling is probabilistic. For a table with $n$ rows and a sampling probability $p$, the output size comes from a binomial distribution with mean $np$ and variance ($np(1-p)$). For a very small $p$, the variance means we could easily get 0 rows back when trying our query!

- If we don't know the size of the table, it's hard to choose a practical sampling probability. First we want to count up the number of rows $n$ (see the discussion of aggregation queries below), to inform us of a good $p$ to choose to get our desired output size. That means yet another full pass of the table to compute the count before we compute the sample!

For these reasons, if we want a proper i.i.d sample, it's a good idea to compute a nice-sized sample and store it elsewhere, keeping it reasonably large for more general use. Since we will not be updating and rows in our individual table, we can do this without worrying that the sample will get "out of date" with respect to the context of individual.

We can use the CREATE TABLE AS SELECT ... (a.k.a. CTAS) pattern to do create a table that saves the output of a query:

%%sql

-- drop table if exists indiv_sample;

create table indiv_sample as

select *

from individual tablesample bernoulli(.03);

Selecting rows and columns, and calling scalar (per-row) functions.¶

OK, we already had a peek at the individual table. Now let's look at specific attributes (columns) relates to who is donating how much.

In addition to referencing the columns of individual in the select clause, we can also derive new columns by writing field-level (so-called "scalar") functions. Typically we reference some table columns in those functions.

In our case, let's compute the log of transaction_amt for subsequent plotting. SQL comes with many typical functions you can use in this way, and PostgreSQL is particularly rich on this front; see the PostgreSQL manual for details.

We'll look at indiv_sample rather than individual while we're just exploring.

%%sql

select name, state, cmte_id,

transaction_amt, log(transaction_amt)

from indiv_sample

limit 10;

CASE statements: SQL conditionals in the FROM clause¶

What about smaller donations?

%%sql

select name, state, cmte_id,

transaction_amt, log(transaction_amt)

from indiv_sample

WHERE transaction_amt < 10

limit 10;

Uh oh, log is not defined for numbers <= 0! We need a conditional statement in the select clause to decide what function to call. We can use SQL's case construct for that.

%%sql select name, state, cmte_id, transaction_amt,

CASE WHEN transaction_amt > 0 THEN log(transaction_amt)

WHEN transaction_amt = 0 THEN 0

ELSE -1*(log(abs(transaction_amt)))

END

from indiv_sample

where transaction_amt < 10

limit 10;

To make things more readable, let's rename the derived column using an AS clause:

%%sql select name, state, cmte_id, transaction_amt,

case when transaction_amt > 0 then log(transaction_amt)

when transaction_amt = 0 then 0

else -1*(log(abs(transaction_amt)))

end AS log_magnitude

from indiv_sample

where transaction_amt < 10

limit 10;

Selecting rows: more interesting WHERE clauses¶

We can choose which rows do and do not appear in the query by putting boolean-valued expressions ("predicates") in the WHERE clause, right after the FROM clause. For example, we might be looking for big donations greater than $1000:

%%sql select name, city, state, transaction_amt

from individual

WHERE transaction_amt > 1000

limit 10;

We can form more complex predicates using Boolean connectives AND, OR and NOT:

%%sql select name, city, state, transaction_amt

from individual

WHERE transaction_amt > 1000

AND (state = 'WI' OR state = 'IL')

AND NOT (city = 'CHICAGO')

limit 10;

Data Generation¶

Sometimes it's useful to auto-generate data in queries, rather than examine data in the database. This is nice for testing, but also can be useful to play some computational tricks as you'll see in your homework.

SQL has a simple scalar function called random that returns a random value between 0.0 and 1.0. You can use this if you need to generate a column of random numbers. (The PostgreSQL manual doesn't promise much about the statistical properties of this random number generator.)

Let's see if any Professor rolls their lucky number on a 10-sided die. (Side-question: is this query really implementing a 10-sided die? If not, what would be a better query?)

%%sql select *,

round(random()*10) as roll_dice

from profs;

Suppose we want to generate a whole bunch of random numbers, not tied to any particular stored table -- can we do that in SQL?

SQL has a notion of table-valued functions: functions that return tables, and hence can be used in a FROM clause of a query. The standard table-valued function is called generate_series, and it's much like numpy's arange:

%sql select * from generate_series(1,5);

%sql select * from generate_series(1, 10, 2);

So to generate 5 random real numbers between 0 and 10, we might use this SQL:

%sql select (10*random()) as rando from generate_series(1,5);

And if we want integers, we can use a PostgreSQL typecast operator (postfix ::<type>):

%sql select (10*random())::integer as rando_int from generate_series(1,5);

Now suppose we want to populate a "matrix" relation my_matrix(x, y, val) full of random values. In Python during Lecture 7 we used np.random.randn(3,2).

import numpy as np

# normally distributed random numbers, mean 0 variance 1

np.random.randn(3,2)

In this relational version we need to explicitly generate the x and y values. We can do this via SQL's built-in cartesian product!

%%sql

SELECT rows.x, columns.y, random() AS val

FROM generate_series(0,2) AS rows(x),

generate_series(0,1) AS columns(y);

We may want to store a matrix as a table—in which case we should set up the schema properly to ensure that it remains a legal matrix.

%%sql

DROP TABLE IF EXISTS my_matrix;

CREATE TABLE my_matrix(x integer, y integer, val float, PRIMARY KEY(x,y));

INSERT INTO my_matrix

SELECT rows.x, columns.y, random() AS val

FROM generate_series(0,2) AS rows(x),

generate_series(0,1) AS columns(y);

SELECT * FROM my_matrix;

A few take-aways from the previous cell:

- Notice the schema of

my_matrixreflects the fact thatvalis a function of the row (x) and column (y) IDs. - We've said before that the order of rows in a table isn't defined in SQL. Is this relational representation of a "matrix" faithful to the mathematical definition of a matrix? Why or why not?

- Notice the

INSERTstatement, which contains aSELECTquery rather than theVALUESwe saw before. You might want to experiment and see what would happen if theSELECTquery produces a different schema thanmy_matrix: try having it produce too few columns, too many columns, columns in different orders, etc. - In the

INSERT...SELECTstatement, notice the definition of output column names via theASin theSELECTclause. Is that necessary here? - In the

INSERT...SELECTstatement, notice the definition of table and column names in theFROMclause viaAS, and the way they get referenced in theSELECTclause. Do we need the tablenames specified in theSELECTclause? Try it and see! - Count the rows in the output...does it look good?

User-defined functions (UDFs)¶

Sometimes we may want a custom scalar function that isn't built into SQL. Some database systems allow you to register your own user-defined functions (UDFs) in one or more programming languages. Conveniently, PostgreSQL allows us to register user-defined functions written in Python. Be aware of two things:

Calling Python for each row in a query is quite a bit slower than using the pre-compiled built-in functions in SQL ... this is akin to the use of Python loops instead of

numpycalls. If you can avoid using Python UDFs you should do so to get better performance.Python is a full-feature programming language with access to your operating system's functionality, which means it can reach outside of the scope of the query and wreak havoc, including running arbitrary UNIX commands. (PostgreSQL refers to this as an

untrustedlanguage.) Be very careful with the Python UDFs you use in your Postgres queries! If you want to be safer write UDFs in a trusted language. PostgreSQL has a number of other languages to choose from, including Java and even R!.

First we tell PostgreSQL we want to use the plpythonu package (so named because of "pl" for "programming language", "u" for "untrusted"):

%sql create extension if not exists plpythonu;

Now let's write some trivial Python code and register it as a UDF using the create function command. Since SQL is a typed language, we need to specify the SQL types for the input and output to our function, in addition to the code (within $$ delimiters) and the language:

%%sql

drop function if exists double(x integer);

create function double(x integer) returns integer

as $$

return x + x

$$ language plpythonu;

%%sql select double(10), 10+10 as builtin_double

from generate_series(1,1);

OK let's do a little performance test on a generated table of 1 million rows. To get timing numbers, we'll prefix our queries with EXPLAIN ANALYZE: this tells postgres to return us information about the query execution, rather than returning us actual data.

%sql EXPLAIN ANALYZE select double(10) \

from generate_series(1, 1000000);

%sql explain analyze select 10+10 from generate_series(1, 1000000);

Notice the Execution Time: the UDF makes things how many times slower?

Question on experimental methodology: as data scientists, how should we get more confidence in our understanding of the performance of these queries?

More interesting UDFs¶

As we said above, PostgreSQL already provides many standard arithmetic and string functions. The reason to use a UDF is typically to invoke some more involved procedural code -- e.g. something from an interesting open-source library you don't want to rewrite.

Obviously there are many interesting Python libraries we might like to use in doing our data science. One that's often useful in a SQL context is the Natural Language Tool Kit nltk. One common use of nltk is sentiment analysis: converting full text into a summary feature that describes the "feelings" behind the text.

Ordinarily you'd train your sentiment analysis package (and we'll learn about training models soon!) But in the next example, we'll use a simple pre-trained sentiment analysis package from nltk called Vader.

First, let's see how we use Vader from Python:

from nltk.sentiment.vader import SentimentIntensityAnalyzer

s = SentimentIntensityAnalyzer()

score = s.polarity_scores('Data scientists are the bomb: the sexiest job of the 21st century!!')

score

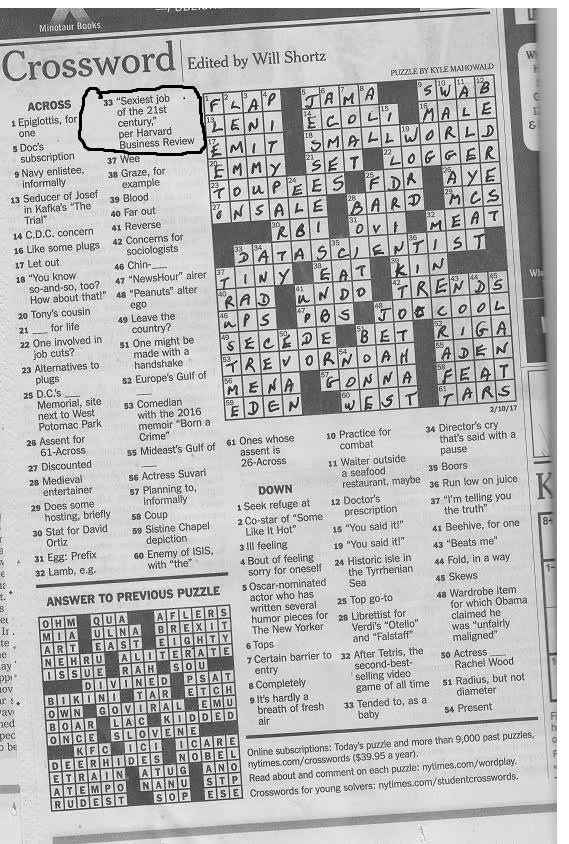

(Yes, this was in the New York Times crossword puzzle on 2/10/17... thanks Mom!)

(Yes, this was in the New York Times crossword puzzle on 2/10/17... thanks Mom!)

Let's boil that down to a single number, the difference between positive and negative

score['pos'] - score['neg']

Now that we've seen what we want in Python, let's register that idea as a Postgres UDF:

%%sql

drop function if exists sentiment(t text);

create function sentiment(t text) returns float

as $$

from nltk.sentiment.vader import SentimentIntensityAnalyzer

s = SentimentIntensityAnalyzer()

scores = s.polarity_scores(t)

return scores['pos'] - scores['neg']

$$

language plpythonu;

%%sql

select name, transaction_amt, memo_text, sentiment(memo_text)

from individual

where memo_text IS NOT NULL

limit 5;

OK, let's look at some tweets -- they'll be more interesting.

%%sql

select timestamp, content, sentiment(content)

from trump

where content IS NOT NULL

limit 10;

Ordering the output¶

As a nicety, SQL allows you to order your output rows, in either ascending (ASC) or descending (DESC) order of the values in columns. For example:

%%sql

select name, city, state, transaction_amt

from indiv_sample

where transaction_amt > 1000

and (state = 'WI' or state = 'IL')

and not (city = 'CHICAGO')

ORDER BY transaction_amt DESC

limit 10;

Note how the combination of ORDER BY and LIMIT 10 gives you the "top 10" results. That's often handy!

You can order by multiple columns, which will leave you with "lexicographic" ordering: it's ordered by the first column, and ties on the first column are ordered by the second column, etc. For example:

%%sql

select name, city, state, transaction_amt

from indiv_sample

where transaction_amt > 2000

and (state = 'WI' or state = 'IL')

and not (city = 'CHICAGO')

order by state DESC, city

limit 10;

Removing duplicates: SELECT DISTINCT¶

As we know, SQL is a multiset logic, preserving the meaning of the number of duplicates in query results. Sometimes, however, we don't want to keep the duplicates, we want to eliminate them. This is done simply by adding the keyword DISTINCT after the SELECT statement:

%%sql

SELECT DISTINCT firstname

FROM profs;

%%sql

SELECT DISTINCT firstname, luckynumber

FROM profs;

Aggregation¶

What's the granularity of our individual table? Transactions? Examining the schema it doesn't look like there's a key for the donor. Maybe the image_num is a key? Or the file_num?

To determine this, we need to count up the total number of rows, and the number of distinct values that occur in the image_num column. SQL provides a family of [aggregate functions] for use in the select clause. In the simplest form, queries with aggregates in the select clause generate a single row of output, with each aggregate function performing a summary of all the rows of input. You can have many aggregate functions in your select clause:

A list of built-in aggregate functions in PostgreSQL is here. In our case, the query we are looking for is as follows. To start with, we'll run it on our sample for a sanity check:

%%sql select count(*) as total_rows,

count(DISTINCT image_num) as image_nums,

count(distinct file_num) as file_nums

from indiv_sample;

Note how the distinct modifier can be used inside an aggregate function: it removes any duplicated values prior to computing the aggregate.

Let's examine some more descriptive statistics of our data using SQL aggregates:

%%sql select count(*), sum(transaction_amt),

avg(transaction_amt), stddev(transaction_amt)

from indiv_sample;

Group By and Aggregation¶

Often we want to partition our input table into groups, and compute an aggregate per group. SQL makes this easy: we use a group by clause to specify our groups:

%%sql select state, count(*), sum(transaction_amt),

avg(transaction_amt), stddev(transaction_amt)

from indiv_sample

GROUP BY state;

Note that we can also group by more than one column. (It does not matter what order the columns appear in the group by clause.) In the absence of a LIMIT clause, would the query below have more rows than the previous one, or fewer?

%%sql select city, state, count(*), sum(transaction_amt),

avg(transaction_amt), stddev(transaction_amt)

from indiv_sample

group by state, city

order by state, city

limit 20;

Legal columns in the select clause of a group by query¶

Note that we can reference the columns city and state from the group by clause in the select list: this makes sense because each row (and each aggregate value) in the output can have at most one city or state associated with it. However it would not make sense to reference name, since there are many names associated with a given row in the output:

%%sql select city, state, name, count(*), sum(transaction_amt),

avg(transaction_amt), stddev(transaction_amt)

from indiv_sample

group by city, state

order by state, city

limit 20;

Read the error message carefully: note that it would have been legal to use name inside an aggregate function, since the aggregate would have taken the many names associated with an output row and summarized them into one value. (Aggregating strings is a bit unusual, but use your imagination!)

Connection to Index variables in math notation (skip in class)¶

There is a direct analogy to index subscripts in familiar math notation. Suppose you have a set of objects $a_{ij}$. Consider the expression $\sum_i a_{ij}$. Which index corresponds to the group by column: $i$ or $j$?

The HAVING Clause in GROUP BY queries¶

We can use the WHERE clause in an aggregate query to filter the rows that are to be aggregated. In relational algebra terms, this is a selection prior to the aggregation, i.e. $\gamma_{c_1...c_m, a_1...a_n}(\sigma_\theta(R))$.

But what about if we want to filter out groups based on a property of the group, say its size? The HAVING clause allows us to do selections after the aggregation, i.e. $\sigma_{\theta_2}(\gamma_{c_1...c_m, a_1...a_n}(R))$. Here, $\theta_2$ can refer to the aggregate properties $a_1, ..., a_n$ computed by $\gamma$.

Let's first use a WHERE clause to focus our aggregates on only large donations.

%%sql select city, state, count(*), sum(transaction_amt),

avg(transaction_amt), stddev(transaction_amt)

from indiv_sample

where transaction_amt > 1000

group by state, city

order by state, city

limit 10;

Now we will using the HAVING clause to only look at cities with more than 10 million dollars in donations:

%%sql select city, state, count(*), sum(transaction_amt),

avg(transaction_amt), stddev(transaction_amt)

from indiv_sample

where transaction_amt > 1000

group by state, city

HAVING sum(transaction_amt) > 10000

order by state, city;

Notice that we have to compute (and type!) sum(transaction_amt) twice. We can clean that up later when we learn about views and common table expressions.

What's up with indiv_sample.image_num?¶

OK, let's return to that query above, and this time identify the duplicates!

%%sql select image_num, count(*) as total_rows

from indiv_sample

group by image_num

having count(*) > 1;

Summary¶

OK, you now have enough information to do basic exploration of the contents of data set, using the equivalent of relational algebra selection and projection, along with niceties around limits, samples, and function invocation. Next time we'll pick up with statistical uses of aggregation, as well as joins.